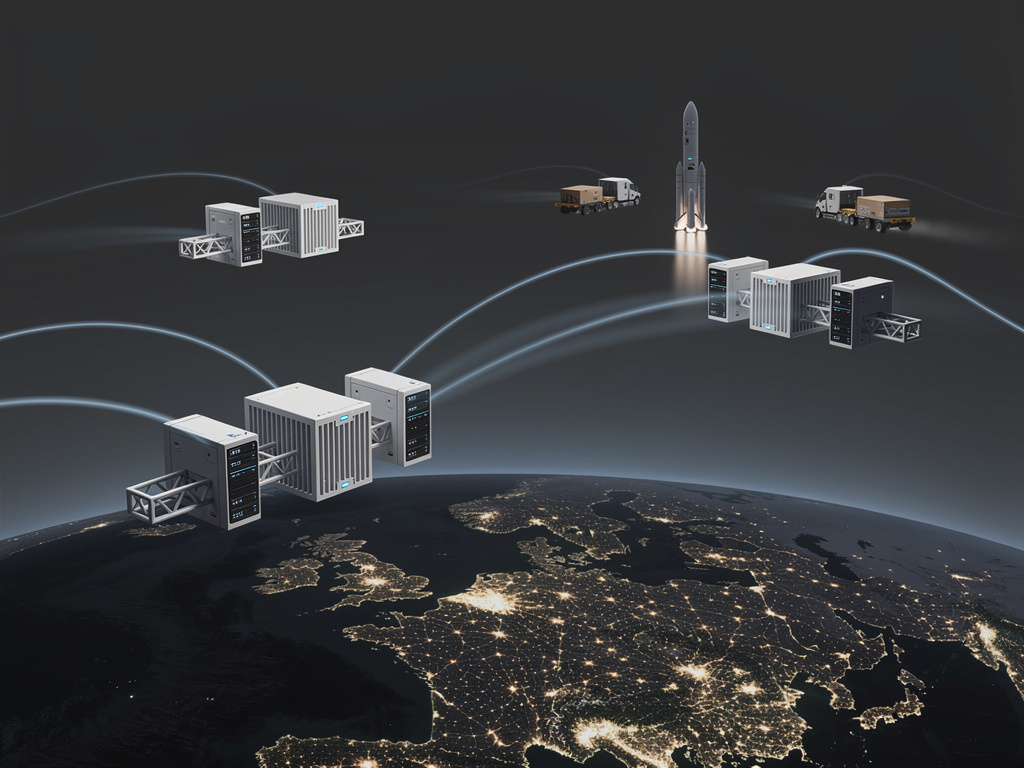

The artificial intelligence (AI) sector is facing a critical challenge as its energy consumption escalates dramatically. Two prominent figures in technology, Sam Altman and Elon Musk, have proposed an unconventional solution: building data centers in space. This concept, which once belonged to the realm of science fiction, is now gaining traction among industry leaders. During a recent event, Altman indicated that such data centers could be operational within a few years, not decades. Musk has voiced a similar view, highlighting the falling cost of launching payloads into orbit with SpaceX’s Starship rocket.

AI’s Urgent Need for Energy Solutions

The urgency behind this proposal stems from the staggering energy requirements of AI model training and inference. According to Goldman Sachs, data center power consumption in the United States is projected to more than double by 2030, primarily due to AI workloads. The International Energy Agency has forecast that global data center electricity use could reach 1,000 terawatt-hours annually by 2026, roughly equivalent to Japan’s total electricity consumption.

This surge in demand has already created significant bottlenecks. In Northern Virginia, where data centers are concentrated, utility provider Dominion Energy has warned of potential power shortages. Similar constraints have been reported in Texas, Ireland, and the Netherlands. In response, major tech companies like Microsoft, Google, and Amazon are signing long-term power purchase agreements and exploring alternative energy sources such as nuclear and geothermal. Yet, these solutions take time to implement, while the demand for computing power continues to grow exponentially.

Solar Power Potential in Space

Altman argues that space-based data centers are feasible, emphasizing their unique advantage: uninterrupted sunlight. In orbit, solar panels can generate five to ten times more energy per square meter than those on Earth, largely because they avoid night, weather, and atmospheric losses. He has suggested that the concept could start materializing around 2026 or 2027, with the rapid decline in launch costs as a pivotal factor. SpaceX’s Starship aims to cut the cost per kilogram to orbit to around $10, a significant reduction from current prices.
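One way to see where a five-to-ten-times figure could come from is a back-of-the-envelope comparison of yearly sunlight per square meter. The sketch below is illustrative only: the ground capacity factors (0.15 to 0.25) and the assumption of a continuously sunlit orbit are assumptions chosen for the example, not figures from Altman or SpaceX, and panel efficiency is taken to be the same in both places so that it cancels out.

```python
# Rough comparison of yearly solar energy per square meter, orbit vs. ground.
# All inputs below are illustrative assumptions, not measured project data.

SOLAR_CONSTANT_W_M2 = 1361.0  # sunlight intensity above the atmosphere
GROUND_PEAK_W_M2 = 1000.0     # standard clear-sky peak used to rate panels


def annual_energy_kwh_per_m2(irradiance_w_m2, capacity_factor):
    """Sunlight energy per square meter per year, in kWh."""
    hours_per_year = 24 * 365
    return irradiance_w_m2 * capacity_factor * hours_per_year / 1000.0


def orbit_to_ground_ratio(ground_capacity_factor, orbit_sunlit_fraction=1.0):
    """How many times more sunlight an orbital panel collects per year.

    orbit_sunlit_fraction=1.0 assumes an orbit that is always in sunlight.
    """
    orbit = annual_energy_kwh_per_m2(SOLAR_CONSTANT_W_M2, orbit_sunlit_fraction)
    ground = annual_energy_kwh_per_m2(GROUND_PEAK_W_M2, ground_capacity_factor)
    return orbit / ground


if __name__ == "__main__":
    # Assumed range of ground capacity factors (night, weather, sun angle).
    for cf in (0.15, 0.20, 0.25):
        print(f"ground capacity factor {cf:.2f}: "
              f"{orbit_to_ground_ratio(cf):.1f}x more energy in orbit")
```

Under these assumptions the ratio lands between roughly five and nine, which suggests that most of the gain comes from continuous illumination rather than from the clearer view of the sun alone.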

Musk’s dual role as both a provider and consumer of launch services places him at the forefront of this evolving discussion. His company xAI is constructing massive AI training clusters, with the Colossus supercomputer in Memphis already straining local power supplies. Musk has pointed out that Starlink, SpaceX’s satellite constellation, demonstrates the viability of deploying sophisticated electronics in low Earth orbit, paving the way for the potential transition to compute satellites.

While the vision is ambitious, several engineering challenges remain. Cooling computer hardware in space poses a different set of problems than on Earth, because there is no air to carry heat away. Systems must instead shed heat by radiation, which removes less heat per unit of surface at typical operating temperatures and therefore demands large radiator areas. Furthermore, radiation exposure can degrade electronics over time, necessitating either hardened components or frequent replacements.
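The radiator-size problem can be made concrete with the Stefan-Boltzmann law, which gives the power a surface radiates as emissivity times a constant times area times temperature to the fourth power. The sketch below is a simplified estimate, not a thermal design: the 100 kW heat load, 300 K radiator temperature, and 0.9 emissivity are assumed values, and it treats the radiator as a single surface facing empty space with no sunlight or Earth heat falling on it.

```python
# Simplified radiator sizing from the Stefan-Boltzmann law.
# Heat load, temperature, and emissivity are assumed example values.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(heat_load_w, radiator_temp_k, emissivity=0.9, sink_temp_k=0.0):
    """Radiating area needed to reject heat_load_w watts.

    sink_temp_k is the effective temperature of the surroundings;
    0.0 models an idealized view of deep space.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (radiator_temp_k**4 - sink_temp_k**4)
    if net_flux <= 0:
        raise ValueError("radiator must be warmer than its surroundings")
    return heat_load_w / net_flux


if __name__ == "__main__":
    # Hypothetical 100 kW compute module with radiators at about room temperature.
    area = radiator_area_m2(heat_load_w=100_000, radiator_temp_k=300.0)
    print(f"radiator area: {area:.0f} m^2")
```

With these inputs the estimate comes to roughly 240 square meters for 100 kW. Because radiated power rises with the fourth power of temperature, running the radiators hotter shrinks the required area quickly, which is one of the main trade-offs in such a design.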

Emerging Startups and Economic Viability

Interest in space-based computing is not limited to Altman and Musk. Several startups are already pursuing plans to build computing infrastructure in orbit. Lumen Orbit, a Y Combinator-backed company, is developing satellites tailored for AI training workloads. It argues that the combination of unlimited solar power and the cold environment of space can mitigate some cooling challenges.

Another notable player is Axiom Space, which is constructing commercial space station modules that could host computing hardware. The European Space Agency has also invested in research regarding orbital data processing, aiming to reduce the environmental footprint of terrestrial digital infrastructure.

Despite these advances, the primary question remains economic rather than technical. Currently, the cost of launching and maintaining computing hardware in space is significantly higher than that of ground-based facilities. A high-performance AI server rack can cost tens of thousands of dollars, while launching it into orbit on a Falcon 9 rocket can add hundreds of thousands of dollars more. However, if SpaceX’s Starship achieves its targeted cost reductions, the economics could shift favorably.

As energy costs for data centers rise with increasing demand, space-based solutions may become more viable. If launch costs decrease to $10 per kilogram, the crossover point where space becomes a competitive option could arrive sooner than anticipated.
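The launch-cost side of this comparison is simple arithmetic: payload mass times price per kilogram. The sketch below uses placeholder numbers to show the scale of the change. The 200 kg rack mass and the $2,500 per kilogram Falcon 9 price are hypothetical values chosen for illustration, not quoted prices; only the $10 per kilogram Starship target comes from the figures above.

```python
# Launch cost as payload mass times price per kilogram.
# RACK_MASS_KG and FALCON9_COST_PER_KG are hypothetical example values.

RACK_MASS_KG = 200                 # assumed mass of one server rack payload
FALCON9_COST_PER_KG = 2_500        # assumed illustrative price, USD per kg
STARSHIP_TARGET_COST_PER_KG = 10   # target figure cited in the article, USD per kg


def launch_cost_usd(payload_mass_kg, cost_per_kg_usd):
    """Cost in USD to put a payload of the given mass into orbit."""
    if payload_mass_kg < 0 or cost_per_kg_usd < 0:
        raise ValueError("mass and price must be non-negative")
    return payload_mass_kg * cost_per_kg_usd


if __name__ == "__main__":
    today = launch_cost_usd(RACK_MASS_KG, FALCON9_COST_PER_KG)
    target = launch_cost_usd(RACK_MASS_KG, STARSHIP_TARGET_COST_PER_KG)
    print(f"assumed current launch cost: ${today:,}")
    print(f"launch cost at target price: ${target:,}")
```

Under these placeholder values, launch falls from a cost that dwarfs the hardware to one that is small next to it. A fuller comparison would also need to account for radiators, solar arrays, replacement hardware, and the cost of ground power being displaced.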

Regulatory and Geopolitical Considerations

The emergence of space-based data centers also introduces complex regulatory questions. Issues regarding jurisdiction over data processed in orbit, spectrum management, and liability for space debris need to be addressed. Geopolitically, controlling significant computing capacity in space could provide countries or companies with strategic advantages, intensifying competition between nations, particularly the United States and China.

The timeline for realizing these ambitious plans is measured in years, not decades. Altman and Musk are not projecting timelines into the 2040s or 2050s but rather suggesting potential operational capabilities by the late 2020s. Their public commitment to such near-term goals could galvanize investment and policy focus within the industry.

The rapid advancements in AI technology have repeatedly proven that previously ambitious timelines can be condensed. Just five years ago, the notion of a chatbot passing a bar exam seemed far-fetched, yet it became a reality. With the increasing urgency of the electricity challenge, the AI industry may soon find itself looking to the skies for solutions.