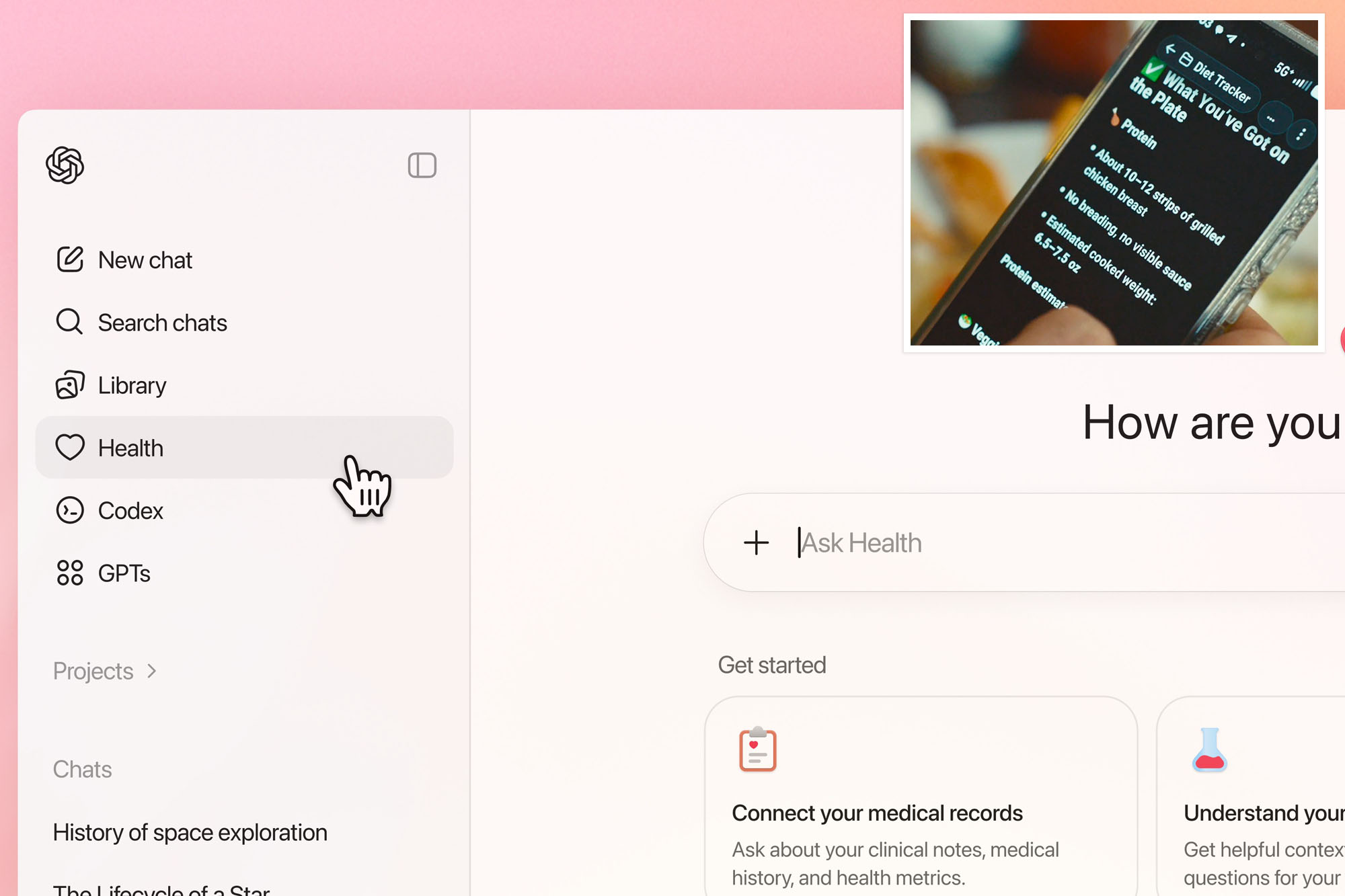

OpenAI’s new tool, ChatGPT Health, which is designed to answer users’ health questions, is under scrutiny for failing to handle emergency situations reliably. The recently launched feature lets users ask health-related questions, analyze medical records, and connect to wellness applications. However, researchers from the Icahn School of Medicine at Mount Sinai have raised alarms about the AI’s performance, particularly in urgent medical scenarios.

In a study published in Nature Medicine, Dr. Ashwin Ramaswamy, an instructor of urology at Mount Sinai, reported that ChatGPT Health often did not recommend immediate care during critical situations. “ChatGPT Health performed well in textbook emergencies such as stroke or severe allergic reactions,” Ramaswamy stated. “But it struggled in more nuanced situations where the danger is not immediately obvious, and those are often the cases where clinical judgment matters most.”

The researchers created 60 clinical scenarios spanning 21 medical specialties and tested them multiple times while varying conditions such as patient race and gender. Altogether, they documented 960 interactions with ChatGPT Health and compared its recommendations against established physician consensus. The AI failed to prompt users to seek emergency care in 52% of serious cases.

For instance, in one scenario involving respiratory failure, ChatGPT Health advised waiting rather than seeking urgent treatment, according to Ramaswamy. Advice of that kind raises significant patient safety concerns. “If you’re experiencing respiratory failure or diabetic ketoacidosis, you have a 50/50 chance of this AI telling you it’s not a big deal,” cautioned Alex Ruani, a doctoral researcher at University College London. “What worries me most is the false sense of security these systems create.”

Furthermore, the study indicated that ChatGPT Health inconsistently alerted users about the 988 Suicide and Crisis Lifeline during high-risk situations. Dr. Girish N. Nadkarni, the chief AI officer of the Mount Sinai Health System, expressed concern over these findings. “While we expected some variability, what we observed went beyond inconsistency,” he noted. “The system’s alerts were inverted relative to clinical risk.”

OpenAI has previously faced criticism over incidents in which chatbots have contributed to user crises, including suicide. In response to the study, an OpenAI spokesperson said the findings do not accurately reflect real-world usage, noting that ChatGPT Health is continuously updated and refined.

Despite the critical feedback, Ramaswamy and Nadkarni are not advocating for the abandonment of AI health tools. Instead, they stress the importance of independent evaluation and monitoring to ensure safety. “We do believe that while there is a need for and a place for consumer-facing AI, there is potential for harm,” they stated. The researchers plan to evaluate AI tools in various healthcare areas, including pediatric care and medication safety.

For individuals struggling with suicidal thoughts or facing mental health crises, immediate help is available. In New York City, residents can call 1-888-NYC-WELL for free and confidential crisis counseling. Outside the five boroughs, the 988 Suicide and Crisis Lifeline can be reached at 988 or through the website SuicidePreventionLifeline.org.